
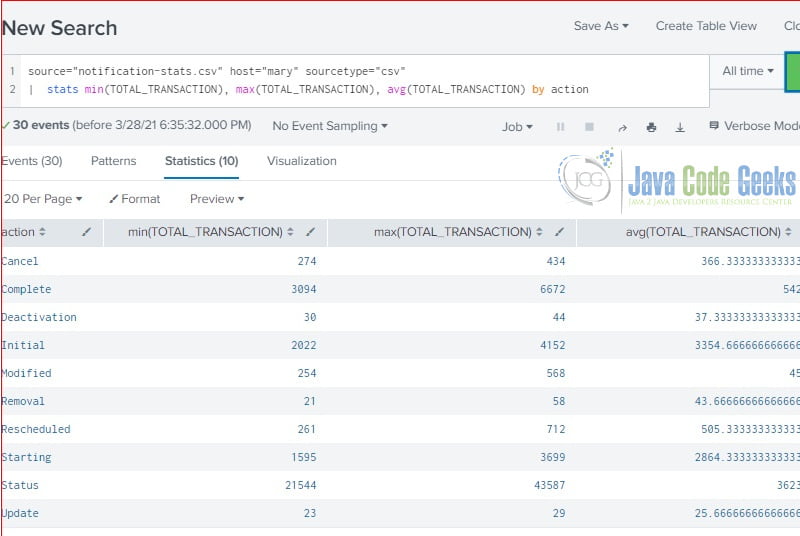

Generally, I recommend using accelerated data models.

Since this seems to be a popular answer, I'll go into even more detail:

For our example, let's use the out-of-the-box data model called "Splunk's Internal Server Logs - SAMPLE" at.

First, run a simple tstats on the DM (it doesn't have to be accelerated) to make sure it's working and you get some results: `| tstats count from datamodel=internal_server`

Note that I use the DM filename `internal_server` (i.e. the Object ID), not the "pretty" name. You tell tstats which DM to use with the `from datamodel=internal_server` clause.

If the DM isn't accelerated, then tstats will translate to a normal search command, so the above command will run: `index=_internal source=*scheduler.log* OR source=*metrics.log* OR source=*splunkd.log* OR source=*license_usage.log* OR source=*splunkd_access.log* | stats count` The translation is defined by the base search of the DM (under "Constraints").

You can verify that you get the exact same count from both the tstats and the normal search. Make sure you use the same fixed time range (i.e. from X to Y). Don't use "Last X minutes", since the time range will be different each time you run the search ad hoc.

Now accelerate the `internal_server` DM if you haven't already. Pick a window big enough, like 7 days, and search the last 24 hours for testing.

If the data model is accelerated, then the new `*.tsidx` index files are created on the indexers at `$SPLUNK_DB$//datamodel_summary/_//DM_`. If you wanna "see" what's inside these tsidx files then you can do something like.

Once the DM has finished accelerating to 100%, try running this: `| tstats count where index=_internal by sourcetype source` And note how much faster this is compared to `index=_internal | stats count by sourcetype source`
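One way to check that a search is really reading the acceleration summaries is the `summariesonly` argument of `tstats`. This is a small sketch that goes slightly beyond the original answer. With `summariesonly=true`, only data that has already been summarized is counted. On a fully built summary the result should therefore match the plain `tstats` count over the same fixed time range, and while the summary is still building it will typically be lower:

```spl
| tstats summariesonly=true count from datamodel=internal_server
```

If this returns nothing while the plain `| tstats count from datamodel=internal_server` returns a count, the DM is most likely not accelerated yet, or the summary does not cover the selected time range.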
